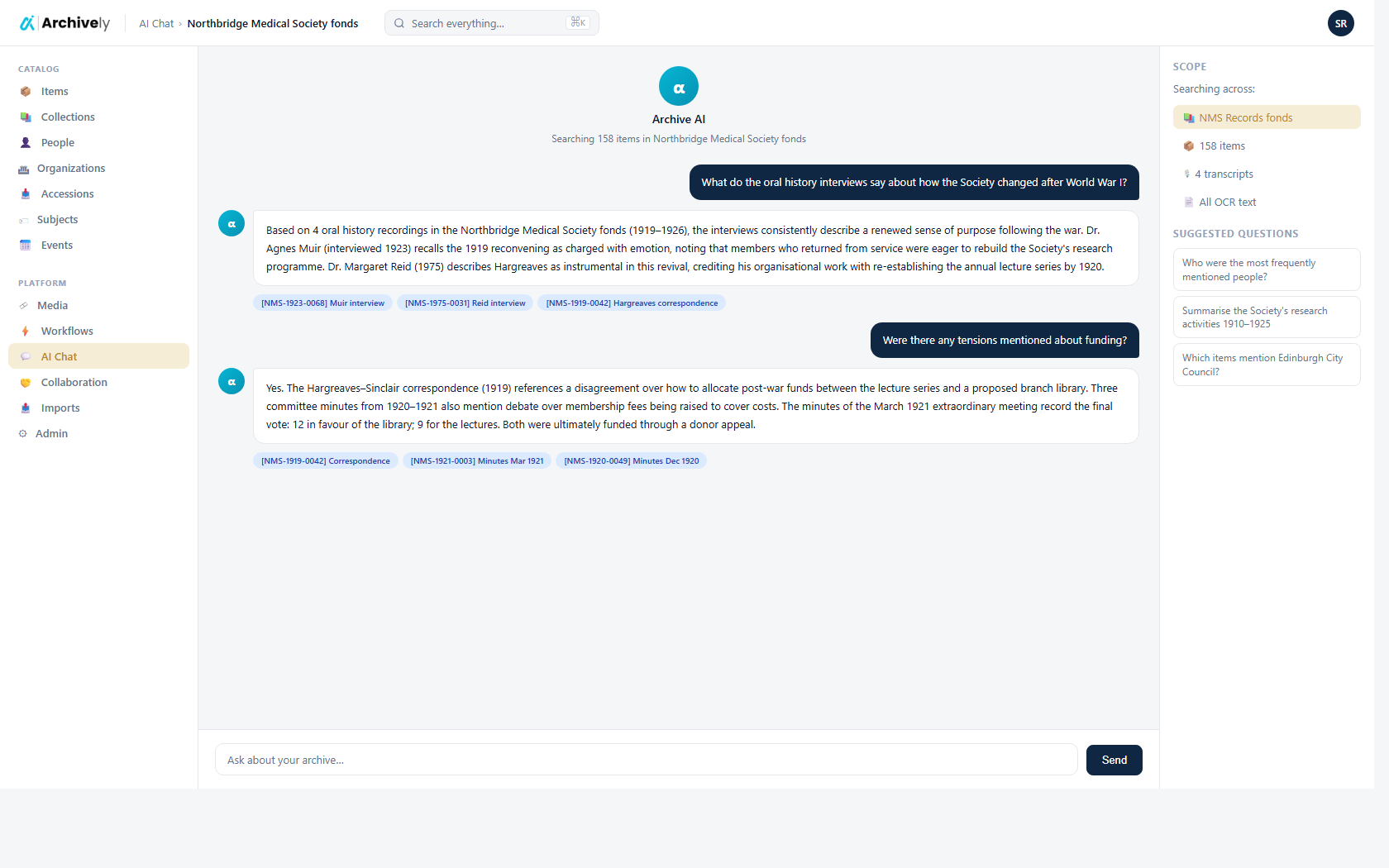

AI chat

Ask your archive anything — it will cite its sources.

A retrieval-augmented chat that never steps outside your collection. Every answer links to the item, page, or timestamp where it came from. No hallucinations, no content drift.

pgvector retrieval · OpenAI embeddings

- Grounding: Your records only

- Citations: Every claim

- Scope: Per-tenant

archively.ai/chat

Grounded in your holdings. Nothing else.

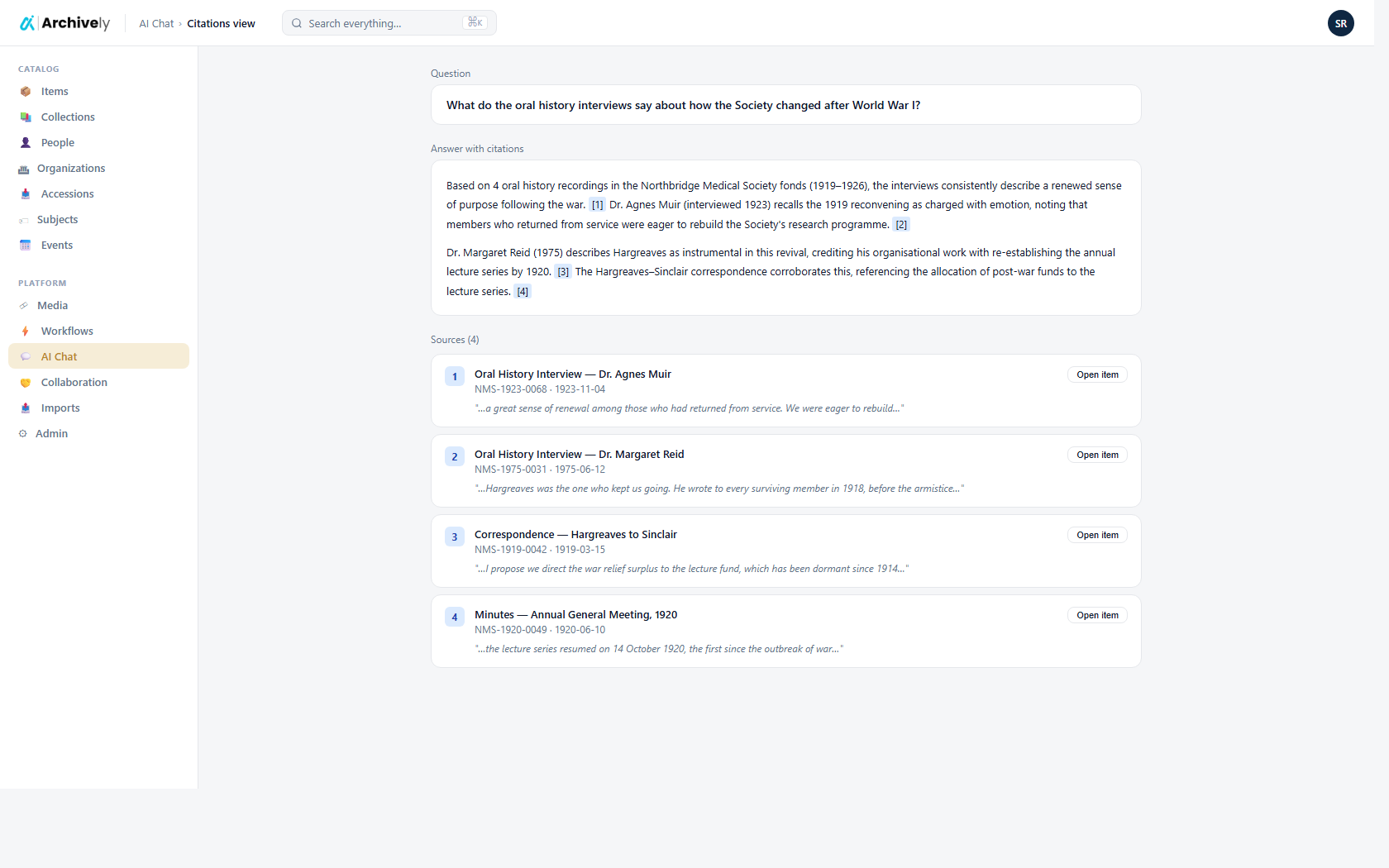

Semantic retrieval over every published record, transcript, and OCR text. The model writes an answer only from what it retrieved — and cites every claim to a source.

- Cites item, page, and (for media) exact timestamp

- Filter chat scope to a fonds, a series, or a date range

- No external web calls in answer generation
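
For the technically curious, scoped retrieval with citations might look roughly like the sketch below. It is an illustration only, not Archively's implementation: the `Chunk` fields, `retrieve`, and `cite` are hypothetical names, and a plain in-memory cosine ranking stands in for the pgvector query.

```python
from dataclasses import dataclass
from datetime import date
from math import sqrt
from typing import Optional


@dataclass
class Chunk:
    """One retrievable passage. All field names are assumptions for this sketch."""
    item_id: str
    fonds: str
    created: date
    text: str
    embedding: list[float]
    page: Optional[int] = None          # set for paginated items
    timestamp_s: Optional[int] = None   # offset in seconds, set for media


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = sqrt(sum(x * x for x in a)) * sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def retrieve(query_vec, chunks, k=3, fonds=None, start=None, end=None):
    """Apply the scope filter first, then rank what remains by similarity."""
    in_scope = [
        c for c in chunks
        if (fonds is None or c.fonds == fonds)
        and (start is None or c.created >= start)
        and (end is None or c.created <= end)
    ]
    ranked = sorted(in_scope, key=lambda c: cosine(query_vec, c.embedding),
                    reverse=True)
    return ranked[:k]


def cite(c):
    """Format a citation: timestamp for media, page for documents, else the item."""
    if c.timestamp_s is not None:
        minutes, seconds = divmod(c.timestamp_s, 60)
        return f"{c.item_id} @ {minutes:02d}:{seconds:02d}"
    if c.page is not None:
        return f"{c.item_id}, p. {c.page}"
    return c.item_id
```

Filtering before ranking means an out-of-scope record can never reach the model, however similar it is to the question.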

archively.ai/chat/c-0014

Researcher-grade controls.

Switch between strict (grounded-only) and exploratory modes. Pin sources. Export the whole conversation with inline citations for a research note.

- Strict / exploratory modes

- Pin and quote specific records

- Export Q&A with bibliography to Markdown
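
As a rough idea of what a Markdown export with a bibliography could contain, here is a minimal sketch. The `Turn` structure, `export_markdown`, and the output layout are assumptions made for illustration, not the product's actual export format.

```python
from dataclasses import dataclass, field


@dataclass
class Turn:
    """One question/answer pair; `sources` holds citation strings (hypothetical)."""
    question: str
    answer: str
    sources: list[str] = field(default_factory=list)


def export_markdown(turns):
    """Render a conversation with numbered inline citations and a bibliography."""
    bibliography = []  # unique sources, in the order they are first cited
    lines = []
    for t in turns:
        marks = []
        for src in t.sources:
            if src not in bibliography:
                bibliography.append(src)
            marks.append(f"[{bibliography.index(src) + 1}]")
        lines.append(f"**Q:** {t.question}")
        lines.append("")
        # rstrip drops the trailing space when a turn has no sources
        lines.append(f"**A:** {t.answer} {' '.join(marks)}".rstrip())
        lines.append("")
    lines.append("## Bibliography")
    lines.append("")
    lines += [f"{i}. {src}" for i, src in enumerate(bibliography, 1)]
    return "\n".join(lines)
```

Each source is numbered once, so a record cited in several answers keeps the same reference number throughout the note.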

Safer than general chat.

Because retrieval is scoped to your published layer, the model can't answer from drafts, embargoed material, or public-web noise. Privacy and accuracy by construction.

- Respects embargo and access-restriction flags

- Curator-configurable sensitive-record masking

- Every Q&A logged for audit
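
A minimal sketch of how these guarantees could be enforced ahead of retrieval, under stated assumptions: the `Record` flags, the rule that an embargo lifts on its end date, the regex-based masking, and the audit entry fields are all hypothetical choices for illustration.

```python
import json
import re
from dataclasses import dataclass
from datetime import date, datetime, timezone
from typing import Optional


@dataclass
class Record:
    """Minimal record with the access flags this sketch assumes."""
    item_id: str
    text: str
    published: bool = True
    restricted: bool = False
    embargo_until: Optional[date] = None


def retrievable(r, today):
    """Only published, unrestricted records past any embargo reach the index."""
    if not r.published or r.restricted:
        return False
    # Assumption: the embargo is treated as lifted on its end date.
    return r.embargo_until is None or r.embargo_until <= today


def mask(text, patterns):
    """Replace every match of the curator-supplied regex patterns."""
    for pattern in patterns:
        text = re.sub(pattern, "[masked]", text)
    return text


def audit_entry(tenant, question, cited_ids):
    """Serialize one Q&A event as a JSON line for an audit log."""
    return json.dumps({
        "at": datetime.now(timezone.utc).isoformat(),
        "tenant": tenant,
        "question": question,
        "cited": cited_ids,
    })
```

Applying `retrievable` when the index is built, rather than when an answer is generated, is what keeps drafts and embargoed material out of reach by construction.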

Ready to see it on your collection?

Load a few records. Run the AI. Review and publish — before lunch.